Multi-Session Playtests

See what sticks when players come back and what quietly fades. Bring the same players back across a few focused sessions to validate learning, pacing, and consistency beyond first impressions.

Check what players remember between sessions

Validate tutorials, early progression, and feature adoption

Spot friction that repeats, not just first-session noise

Test builds without long-term commitment

Engagement Isn't a Moment. It's the Connected Experience.

A game can test well in a single session yet break when players return. Multi-Session Playtests reveal how players re-enter, re-learn, and re-engage across a defined set of sessions, exposing friction that only appears between plays, not within one.

Whether players remember systems or need to relearn them

How motivation changes once guidance and novelty wear off

Which mechanics feel clearer, deeper, or more tedious across sessions

Whether progression pacing matches player expectations

Test the Early-Game Moments That Shape Retention

Tutorial recall

Early progression pacing

Feature adoption and drop-off

Session-to-session clarity

Mid-game UI and system depth

Perceived progress and payoff

Turn Return Sessions Into Clear Direction

Multi-Session Playtests on Lysto are built to capture the return-play experience in a structured way, without manual follow-ups or fragmented insights.

Define the sessions

Choose how many times players return and what each session focuses on: tutorial mastery, feature depth, pacing, or progression checkpoints.

Share your build

Upload live builds, test builds, or evolving prototypes. No special setup required.

Watch players play

Players record each session unmoderated, thinking aloud as their memory, strategy, and expectations evolve.

Shape the next move

Compare sessions side by side to see what improves, what breaks, and what never quite lands.

Run playtests on your schedule, with players around the world, 24/7.

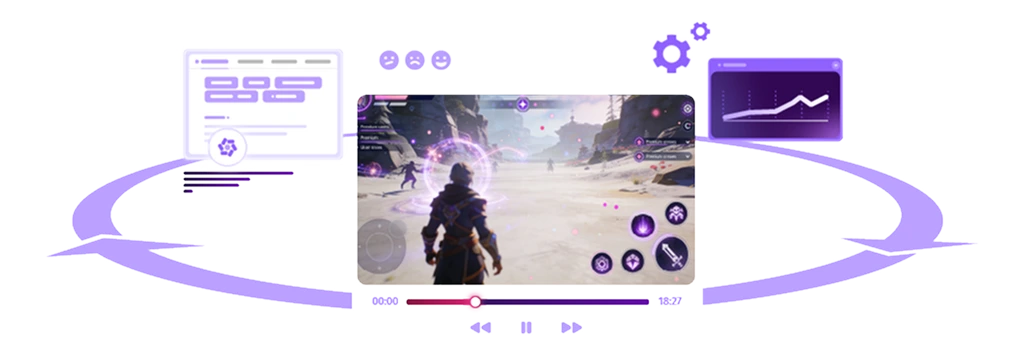

A Clear, Actionable View of How Players Navigate Your Game

Lysto converts scattered gameplay reactions into an organized model of how players think, feel, and behave. When needed, our game user research experts can help you interpret complex patterns with genre-specific insight.

Key Moments Identified

Confusion, hesitation, friction, delight, clarity shifts.

AI-Annotated Gameplay

Timestamped clips showing exactly where players get blocked or excited.

Emotional Signals

Tone, sentiment, and reaction cues aligned with gameplay events.

Player Commentary

Layered audio insights revealing expectations and mental models.

Cross-Player Patterns

AI clusters recurring issues and shared experiences across players.

Survey Integration

Attitudinal signals combined with behavioural data.

Secure Player Access

NDA-bound, strictly qualified players ensure early concepts and builds stay protected.

Frequently Asked Questions

A multi-session playtest involves the same players returning for two to four gameplay sessions, spaced at short intervals, often within the same day. This format helps teams understand how player experience changes after repeated exposure, capturing insights that a single session cannot.

Multi-session playtests are especially useful when evaluating learning across sessions, tutorial recall, re-entry friction, progression pacing, and how player confidence and understanding evolve between plays. They allow teams to observe how players adapt, apply prior learnings, and navigate the game beyond the first session.

This approach is well suited for games with more complex systems or progression mechanics, where understanding short-term learning and experience flow is critical.

A multi-session playtest is best used when you want to understand how player experience evolves across repeated gameplay sessions within a short timeframe.

It is especially useful when testing mechanics or systems that require learning, evaluating whether players retain and apply tutorial learnings when they return, identifying re-entry friction between sessions, or assessing progression pacing and experience flow beyond the first play session.

Multi-session playtests are well suited for scenarios where a single session is not enough to capture how players adapt, gain confidence, and interact with the game over consecutive sessions.

A single-session playtest gathers player feedback within one continuous gameplay session, typically lasting 30–60 minutes. It is best suited for evaluating FTUE, onboarding, usability, and specific features.

A multi-session playtest involves the same players returning for multiple gameplay sessions over short intervals, often within one to two days. This format helps teams understand how player confidence, learning, and experience evolve between sessions, including re-entry friction and application of prior learnings.

The choice between the two depends on your playtesting goals.

A multi-session playtest involves the same players completing multiple gameplay sessions with set intervals between them, typically within one to two days. It is designed to evaluate learning, player confidence, re-entry experience, and short-term progression across consecutive sessions.

A longitudinal playtest, on the other hand, spans a longer period of time, often several days or weeks. It is used to understand longer-term player behaviour, such as habit formation, engagement patterns and retention levels, progression over time, and how the experience holds up beyond the initial learning phase.

At Lysto, our Expert User Researcher team supports game studios in designing and running playtests aligned with their research goals.

Most multi-session playtests involve two to four gameplay sessions per player. This range is usually sufficient to observe how players learn, return to the game, and adjust their behaviour across sessions.

The exact number of sessions depends on your research goals and what you are testing. Simpler mechanics or tutorial validation may require fewer sessions, while more complex systems or progression flows may benefit from additional sessions.

If you need help deciding the right number of sessions, our Expert User Researcher team can guide you based on your playtesting objectives.

For most multi-session playtests, 6–10 players are typically sufficient to identify patterns in learning, re-entry experience, and short-term progression across sessions.

If you are testing more complex systems, multiple features, or different player segments, you may consider increasing the number of players to 10–15 for stronger validation.

The ideal number of players depends on your research goals and the complexity of what is being evaluated. If needed, our Expert User Researcher team can help you determine the right number of playtesters for your study.

Yes. With Lysto, teams can define session intervals, player instructions, and tasks for each session, recruit the right players, and share builds securely. Gameplay, player feedback, and session data are captured, structured, and analyzed across all sessions, making it easy to review how player behaviour changes from one session to the next.

Multi-session playtests are not typically used to analyse retention in the traditional sense. Their primary purpose is to understand short-term learning, re-entry experience, and how players adapt across consecutive sessions.

However, teams can still observe return behaviour, such as whether players come back for scheduled sessions and complete assigned tasks. These signals provide context around short-term continuity of experience rather than long-term retention metrics.

At Lysto, we handle the heavy lifting throughout the playtesting process. Once your playtest is set up and player criteria are defined, we take care of recruiting the right players, managing and conducting the sessions, and ensuring participants return as scheduled for multi-session studies.

All gameplay, feedback, and session data are captured, structured, and analyzed within our Player Experience (Px) platform, giving your team organized insights without the overhead of manual coordination.

Depending on how the study is set up, you receive gameplay recordings from each session, think-aloud player commentary, audio transcripts, and session-wise completion data. You can also collect post-session or final survey responses to capture player reflections at different points in the experience. Once the playtest concludes, all gameplay recordings are structured, grouped, and analyzed by our AI to help teams make decisions quickly and iterate faster.

By reviewing data across sessions, teams can identify changes in player behaviour, confidence, task completion, progression flow, and re-entry experience. If included, AI-generated insights and research reports help summarize patterns and translate findings into actionable design recommendations.